How do I match the torch behavior to numpy? I've read various questions on the torch forums (e.g. this one with only one dimension), but couldn't find how to apply them here. Similarly, `index_select` only works for one dimension, but I need it to work for at least 2 dimensions.

My attempt to reduce your problem: you're trying to index into an array with indices of larger dimension (and possibly size). You just need to flatten the indices, then reshape and permute the dimensions. In the full working version the intermediate shape is `output_shape = (2, len(arr), len(arr), *arr.shape)` (note that this is different to numpy!), and the function ends by reshaping the gathered values to `output_shape` and calling `.permute(2, 1, 0, *range(3, len(output_shape)))`.

I used pytest to run this on random arrays of different dimensions, and it seems to work in all cases. The test, `test_combination_matrix(random_dims)`, takes `random_dims` from `range(1, 5)`, draws `dim_size = np.random.randint(1, 40, size=random_dims)`, builds `elements = np.random.random(size=dim_size)`, and asserts with `np.array_equal` that `np_combs` and `torch_combs` agree.

`permute()` is mainly used to exchange dimensions. Unlike `view()`, it changes the order in which the tensor's elements are read. For a tensor `x` of size `torch.Size([2, 3, 5])`, `x.permute(2, 0, 1).size()` is `torch.Size([5, 2, 3])`. There is also a module form that takes `dims: List[int]`, the desired ordering of dimensions, and returns a view of the input tensor with its dimensions permuted; its `forward(x: Tensor) -> Tensor` defines the computation performed at every call. Use stride and size to track the memory layout, for example with `a = torch.randn(3, 4, 5)`, `b = a.permute(1, 2, 0)` and `c = b.contiguous()`.

`torch.nonzero(..., as_tuple=True)` returns a tuple of 1-D index tensors, allowing for advanced indexing, so `x[x.nonzero(as_tuple=True)]` gives all nonzero values of tensor `x`. Of the returned tuple, each index tensor contains nonzero indices for a certain dimension. PyTorch also supports sparse tensors in coordinate format.
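The flatten, reshape, and permute recipe can be sketched roughly as below. This is not the original poster's function, which is only partly visible here. The names `np_pair_matrix` and `torch_pair_matrix` are hypothetical, and the sketch assumes the goal is an output where `out[i, j, 0] == arr[i]` and `out[i, j, 1] == arr[j]`. Because it builds the index grid in `ij` order, the permutation is `(1, 2, 0, ...)` rather than the `(2, 1, 0, ...)` quoted in the answer.

```python
import numpy as np
import torch


def np_pair_matrix(arr):
    """NumPy reference: index with a (2, n, n) integer array in one step."""
    n = len(arr)
    ii, jj = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    mesh = np.stack([ii, jj])            # shape (2, n, n)
    pairs = arr[mesh]                    # shape (2, n, n, *arr.shape[1:])
    return np.moveaxis(pairs, 0, 2)      # shape (n, n, 2, *arr.shape[1:])


def torch_pair_matrix(arr):
    """Torch version: flatten the indices, then reshape and permute."""
    n = arr.shape[0]
    idxs = torch.arange(n)
    first = idxs.repeat_interleave(n)    # i for every flattened (i, j) pair
    second = idxs.repeat(n)              # j for every flattened (i, j) pair
    flat = torch.cat([first, second])    # 1-D index of length 2 * n * n
    gathered = arr[flat]                 # shape (2 * n * n, *arr.shape[1:])
    shaped = gathered.reshape(2, n, n, *arr.shape[1:])
    # Reorder axes from (k, i, j, ...) to (i, j, k, ...).
    return shaped.permute(1, 2, 0, *range(3, shaped.dim()))


elements = np.random.random((4, 3, 2))
np_combs = np_pair_matrix(elements)
torch_combs = torch_pair_matrix(torch.from_numpy(elements))
print(np.array_equal(np_combs, torch_combs.numpy()))
```

Flattening matters because the index then has a single dimension, which is the case that plain 1-D indexing (and `index_select`) handles directly; the extra axes come back through `reshape`.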

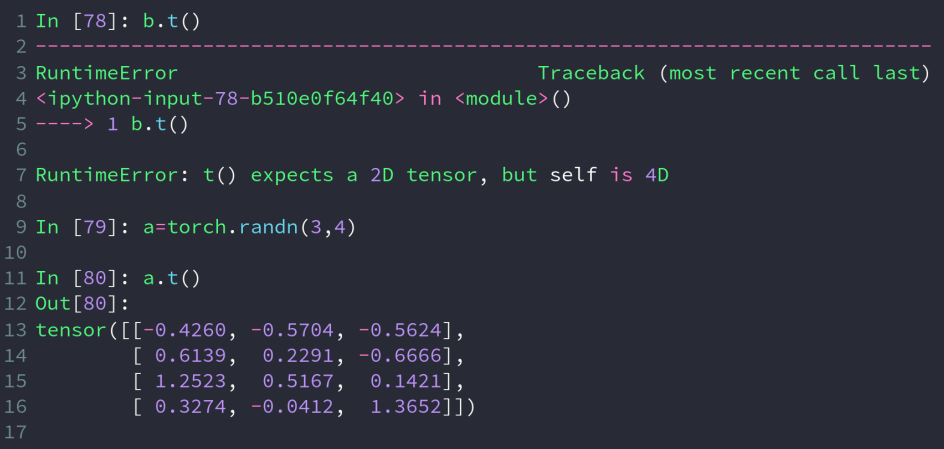

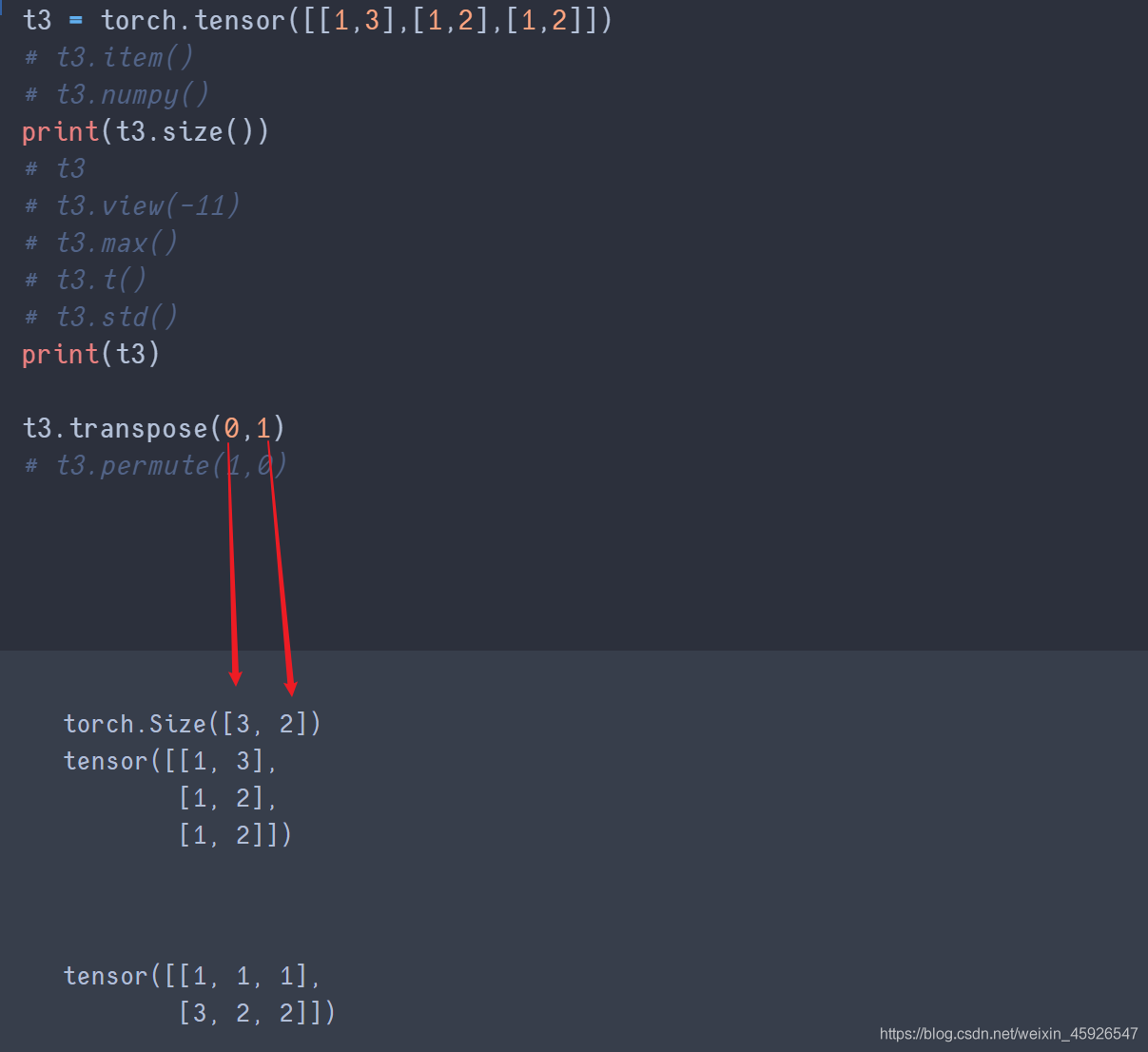

I have the following function, which does what I want using `numpy.array`, but breaks when feeding a `torch.Tensor` due to indexing errors. The numpy version allocates `output = np.zeros((len(arr), len(arr), 2, *arr.shape), dtype=arr.dtype)`. The torch version allocates `output = torch.zeros(len(arr), len(arr), 2, *arr.shape, dtype=arr.dtype)`, and the failing line is `output = arr.permute(2, 1, 0, *np.arange(3, num_dims))`. The traceback runs through `torch_combs = combination_matrix(features)`, then `File "/home/XXX/util.py", line 218, in combination_matrix` and `File "/home/XXX/util.py", line 212, in torch_combination_matrix`, and ends with `RuntimeError: number of dims don't match in permute`.

I was hoping to find the implementation for permutation. As I understand it, permute works by changing the strides of the view mechanism, so permute changes the tensor so that it is not contiguous anymore.

Whereas `tensor.permute()` is only used to swap the axes. Since we permute the axes/dims, the shape changes from (2, 3) to (3, 2). You can also use negative indexing to do the same thing.
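To make the stride explanation concrete, here is a small sketch on a (2, 3) tensor. The variable names are illustrative only; the calls used (`stride`, `is_contiguous`, `data_ptr`, `contiguous`) are standard `torch.Tensor` methods.

```python
import torch

a = torch.arange(6).reshape(2, 3)   # rows are stored one after another
b = a.permute(1, 0)                 # swap the two axes, shape becomes (3, 2)
c = b.contiguous()                  # copy into a fresh row-major buffer

print(a.shape, a.stride(), a.is_contiguous())
print(b.shape, b.stride(), b.is_contiguous())
print(c.shape, c.stride(), c.is_contiguous())

# permute does not move data: a and b share the same storage,
# while contiguous() on a non-contiguous tensor allocates a copy.
print(a.data_ptr() == b.data_ptr())
print(b.data_ptr() == c.data_ptr())
```

Only the strides differ between `a` and `b`, which is why `b` reports itself as non-contiguous even though no element was moved.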

`torch.view()` reshapes the tensor to a different but compatible shape. For example, our input tensor `aten` has the shape (2, 3). This can be viewed as tensors of shapes (6, 1), (1, 6) etc.: reshaping (or viewing) the 2x3 matrix as a column vector of shape 6x1, or alternatively as a row vector of shape (1, 6), as in `aten.view(-1, 6)`.

On `contiguous`: `view` only works on a contiguous tensor, so if `transpose` or `permute` was called before `view`, call `contiguous()` first to get a contiguous copy.

I tried to run the code below for training a sequence tagging model (didn't list all of the code because it works fine). But I get the following error: `AttributeError: module torch has no attribute permute`. torch is definitely installed, otherwise other operations made with torch wouldn't work either.

Related reading: Permute Me Softly: Learning Soft Permutations for Graph Representations.
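The difference between `view` and `permute`, and the need for `contiguous()` in between, can be illustrated with a short sketch. The tensor name `aten` follows the text; everything else is illustrative. It uses the method form `aten.permute(...)`, which may also sidestep the `AttributeError` above if that error comes from a PyTorch release without the function form `torch.permute` (an assumption, since the failing code is not shown).

```python
import torch

aten = torch.arange(6).reshape(2, 3)    # [[0, 1, 2], [3, 4, 5]]

col = aten.view(6, 1)                   # column vector, same element order
row = aten.view(-1, 6)                  # -1 lets view infer the size: (1, 6)

reshaped = aten.view(3, 2)              # keeps memory order
swapped = aten.permute(1, 0)            # swaps axes, i.e. a transpose

print(reshaped.tolist())
print(swapped.tolist())

# view needs a compatible memory layout, so it fails right after permute.
try:
    swapped.view(6)
    view_failed = False
except RuntimeError:
    view_failed = True

flat = swapped.contiguous().view(6)     # copy first, then view works
print(view_failed, flat.tolist())
```

Both `reshaped` and `swapped` have shape (3, 2), but only `swapped` pairs up elements from different rows of the original, which is the practical difference between reshaping and swapping axes.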
